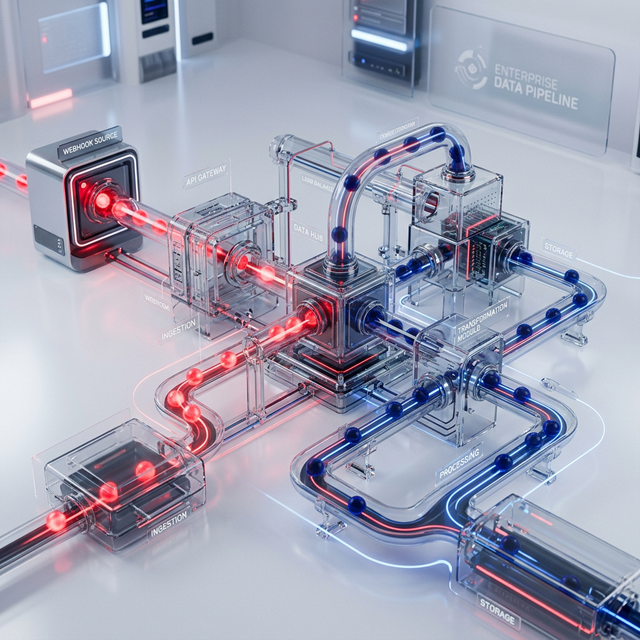

For most of the SaaS era, the conventional wisdom was clear: frontend is for display, backend is for processing. Automation logic — certainly anything involving external API calls, data writes, or webhook handling — belonged exclusively in server-side infrastructure. Express servers, dedicated worker processes, queue systems, and microservices architectures formed the operational backbone of every non-trivial automation workflow.

This architecture remains valid, and for complex orchestration workflows it is still the right approach. But the frontend tooling landscape of 2024-2025 has introduced a generation of primitives that legitimately challenge the assumption that backend infrastructure is always required for automation logic. Understanding when to use these primitives, and equally when not to, is increasingly important for engineering teams balancing development velocity against operational complexity.

Server Actions: Next.js's Native Mutation Layer

Introduced as an alpha feature in Next.js 13.4 and marked stable in Next.js 14, Server Actions are async functions marked with the "use server" directive that run on the server without you having to create, deploy, and wire up a separate API endpoint. From the perspective of the React component tree, a Server Action appears as a regular async function. Under the hood, Next.js exposes it as a POST endpoint and handles the request serialization, form data parsing, and server-side execution transparently.

For automation use cases, this matters because it removes an entire category of operational surface area. Consider the common pattern: a form submission triggers a webhook to an external automation platform. With a traditional decoupled architecture, you need to create an API route, deploy it separately from your frontend, configure CORS headers and origin validation so the browser can reach it, keep the platform's webhook URL and credentials out of client-side code, and manage the operational lifecycle of that endpoint. With Server Actions, the browser-to-server leg collapses to a type-safe function call that can be defined in a React Server Component or imported into a Client Component, with full access to server-side secrets and no cross-origin configuration, because the action is invoked on your own origin. The action is still a publicly reachable POST endpoint under the hood, so authenticating the caller and validating input inside it remain your responsibility.
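As a minimal sketch of the form-to-webhook pattern, the action below validates a submission and forwards it to an automation webhook from the server. The file path, the N8N_WEBHOOK_URL and N8N_WEBHOOK_TOKEN environment variable names, the bearer-token header, and the payload shape are all assumptions for illustration; match them to whatever your webhook node is actually configured to expect.

```typescript
"use server";

// Minimal Server Action sketch (assumed file: app/actions.ts).
// N8N_WEBHOOK_URL and N8N_WEBHOOK_TOKEN are hypothetical env var names.
// They are read on the server only and never shipped to the browser.

export type SubmitLeadResult = { ok: true } | { ok: false; error: string };

export async function submitLead(formData: FormData): Promise<SubmitLeadResult> {
  // Server Actions can be invoked by a direct POST, so validate input here
  // (and check the user's session, if your app has one) instead of trusting the form.
  const email = String(formData.get("email") ?? "").trim();
  const company = String(formData.get("company") ?? "").trim();
  if (!email.includes("@")) {
    return { ok: false, error: "A valid email is required." };
  }

  const webhookUrl = process.env.N8N_WEBHOOK_URL;
  if (!webhookUrl) {
    return { ok: false, error: "Webhook URL is not configured." };
  }

  // Forward the lead to the automation platform from the server side.
  const res = await fetch(webhookUrl, {
    method: "POST",
    headers: {
      "Content-Type": "application/json",
      // Hypothetical header-auth scheme; use whatever your webhook node expects.
      Authorization: `Bearer ${process.env.N8N_WEBHOOK_TOKEN ?? ""}`,
    },
    body: JSON.stringify({ email, company, submittedAt: new Date().toISOString() }),
  });

  if (!res.ok) {
    return { ok: false, error: `Webhook responded with status ${res.status}.` };
  }
  return { ok: true };
}
```

A Client Component can import submitLead and call it from a submit handler, then render the returned error message; the webhook URL and token stay on the server throughout.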

What Server Actions Enable and What They Don't

Appropriate for: Triggering n8n or Make.com webhooks from form submissions; writing directly to a database from a mutation; calling third-party APIs with secrets that must not reach the browser; running data transformations server-side before updating state.

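NOT appropriate for: Long-running processes (Server Actions run inside the hosting platform's function execution time limit); streaming data continuously to the client; receiving inbound webhooks from external services (actions are addressed by build-generated IDs rather than a stable public URL, so use a Route Handler instead); complex orchestration requiring retry logic, dead-letter queues, or parallel fan-out execution.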

Server-Sent Events (SSE): Real-Time Push Without WebSocket Complexity

WebSockets provide bidirectional real-time communication but come with substantial operational complexity: sticky sessions in load-balanced environments, connection lifecycle management, firewall traversal challenges, and the cognitive overhead of managing a persistent bidirectional channel when you only actually need server-to-client push.

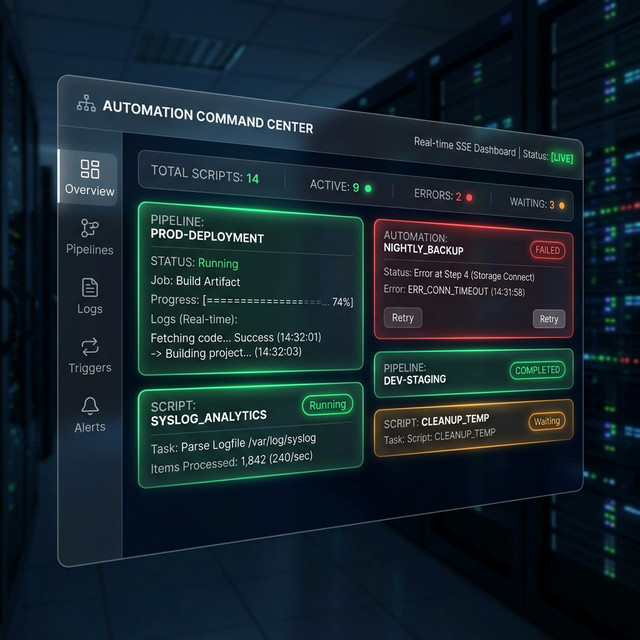

For the vast majority of real-time automation use cases ("show the user the status of their background job", "update the dashboard when a new lead arrives", "stream the output of an LLM response"), Server-Sent Events (SSE) are the better architectural choice. SSE uses a standard HTTP connection with the MIME type "text/event-stream". The server holds the connection open and pushes events as plain-text field lines (such as "data:", "id:", and "event:"), with a blank line marking the end of each event. The client receives them through the browser's native EventSource API, with no additional library dependencies in modern browsers.
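The wire format is simple enough to produce by hand. The sketch below assumes a Next.js App Router Route Handler at a hypothetical path such as app/api/jobs/[id]/events/route.ts; the hard-coded status list is placeholder data standing in for a real job store.

```typescript
// Minimal SSE sketch as a Route Handler. The statuses are placeholder data.

type SseEvent = { id?: string; event?: string; data: unknown };

// Serialize one event in text/event-stream format: optional "id:" and
// "event:" fields, one "data:" line per payload line, then a blank line.
export function formatSseEvent({ id, event, data }: SseEvent): string {
  let out = "";
  if (id !== undefined) out += `id: ${id}\n`;
  if (event !== undefined) out += `event: ${event}\n`;
  const payload = typeof data === "string" ? data : JSON.stringify(data);
  for (const line of payload.split("\n")) out += `data: ${line}\n`;
  return out + "\n";
}

const sleep = (ms: number) => new Promise((resolve) => setTimeout(resolve, ms));

export async function GET(): Promise<Response> {
  const encoder = new TextEncoder();
  // Placeholder sequence; a real handler would poll or subscribe to job state.
  const statuses = ["queued", "running", "completed"];

  const stream = new ReadableStream<Uint8Array>({
    async start(controller) {
      for (let i = 0; i < statuses.length; i++) {
        const chunk = formatSseEvent({ id: String(i + 1), event: "status", data: { status: statuses[i] } });
        controller.enqueue(encoder.encode(chunk));
        await sleep(10);
      }
      controller.close();
    },
  });

  return new Response(stream, {
    headers: {
      "Content-Type": "text/event-stream",
      // Stop proxies and the browser from caching or buffering the stream.
      "Cache-Control": "no-cache, no-transform",
    },
  });
}
```

On the client, new EventSource("/api/jobs/123/events") with addEventListener("status", ...) receives these events. EventSource treats a closed connection as a reason to reconnect, so the client should call close() once it sees a terminal status such as "completed"; otherwise it will request the stream again.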

According to the MDN Web API documentation, SSE supports automatic reconnection natively, event IDs for resumable streams (the browser resends the last received ID in a "Last-Event-ID" header when it reconnects), and named event types for routing different event categories to different handlers. For automation pipeline status dashboards, a genuinely common enterprise requirement, SSE covers most of what WebSockets would offer for one-way push at a fraction of the implementation complexity.
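Resumption works because the browser remembers the last "id:" it received and sends it back in a Last-Event-ID request header when it reconnects. A minimal sketch, assuming a hypothetical in-memory eventLog with numeric IDs (a real deployment would need a durable store), replays only the events the client missed:

```typescript
// Sketch: resuming an SSE stream from the Last-Event-ID header.
// `eventLog` is a hypothetical stand-in for a durable event store.

type LoggedEvent = { id: number; event: string; data: string };

export const eventLog: LoggedEvent[] = [
  { id: 1, event: "lead", data: '{"name":"Ada"}' },
  { id: 2, event: "lead", data: '{"name":"Grace"}' },
  { id: 3, event: "job", data: '{"status":"done"}' },
];

// Return only events newer than the ID the client last saw.
export function eventsAfter(log: LoggedEvent[], lastEventId: string | null): LoggedEvent[] {
  if (lastEventId === null) return log;
  const last = Number(lastEventId);
  if (!Number.isFinite(last)) return log; // unparseable ID: send everything
  return log.filter((e) => e.id > last);
}

export function replayResponse(request: Request): Response {
  const pending = eventsAfter(eventLog, request.headers.get("last-event-id"));
  // "retry:" sets how many milliseconds the browser waits before reconnecting.
  let body = "retry: 5000\n\n";
  for (const e of pending) {
    // The "event:" name routes each message to a matching addEventListener handler.
    body += `id: ${e.id}\nevent: ${e.event}\ndata: ${e.data}\n\n`;
  }
  return new Response(body, { headers: { "Content-Type": "text/event-stream" } });
}
```

This version sends the backlog and closes; a production handler would keep streaming new events after the replay. The "lead" and "job" event names are what let the client attach a separate handler to each category.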

Webhook Endpoint Security: The Frequently Missed Step

Whether you expose a webhook endpoint via a traditional API route, a Next.js Route Handler, or a dedicated edge function, the security validation pattern is the same, and it is unfortunately often omitted during development and never added back before production.

Many webhook senders sign their payloads with an HMAC-SHA256 digest generated from a shared secret. With general-purpose automation platforms such as n8n, Zapier, and Make.com, you may need to configure that signature (or an equivalent shared-secret header) in the workflow yourself, so check what your platform actually sends. The receiving endpoint must verify this signature before processing the payload. The verification algorithm is deterministic: compute the HMAC-SHA256 of the raw request body using the shared secret, then compare the result to the signature transmitted in the webhook's header using a constant-time comparison, so that response timing does not reveal how much of a forged signature was correct. If they match, the request is authentic. If they do not match, or the signature is missing, reject the request immediately regardless of payload content, because an unsigned or incorrectly signed webhook is indistinguishable from a malicious injection attempt by an attacker who has discovered your endpoint URL.
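A minimal sketch of the verification step using Node's built-in crypto module. It assumes the sender transmits a hex-encoded digest, optionally prefixed with "sha256=" (the convention GitHub uses, for example); the header name and encoding are platform-specific, so treat both as assumptions.

```typescript
import { createHmac, timingSafeEqual } from "node:crypto";

// Verify an HMAC-SHA256 signature computed over the raw request body.
// Assumes a hex digest, optionally prefixed with "sha256=".
export function verifyWebhookSignature(
  rawBody: Buffer | string,
  signatureHeader: string | null | undefined,
  secret: string,
): boolean {
  if (!signatureHeader) return false; // missing signature: reject outright

  const received = signatureHeader.startsWith("sha256=")
    ? signatureHeader.slice("sha256=".length)
    : signatureHeader;
  const expected = createHmac("sha256", secret).update(rawBody).digest("hex");

  const a = Buffer.from(received, "hex");
  const b = Buffer.from(expected, "hex");
  // timingSafeEqual throws on unequal lengths, so check length first;
  // a length mismatch already means the signature is wrong.
  return a.length === b.length && timingSafeEqual(a, b);
}
```

The length check leaks only the digest length, which is public anyway (32 bytes for SHA-256); the byte-by-byte comparison itself runs in constant time.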

Implementation in Node.js requires careful attention to one common bug: the sender computed the signature over the exact bytes it transmitted, so your HMAC must be computed over those same raw bytes, not over a parsed JSON object. Many implementations break this by running express.json() middleware before the verification step. The middleware consumes the body stream and leaves only the parsed object, and re-serializing that object with JSON.stringify rarely reproduces the original bytes (whitespace, number formatting, and character escaping can all differ), so valid signatures fail. The correct sequence is: buffer the raw body bytes, verify the HMAC, then parse the JSON.
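The same ordering in a Next.js Route Handler, as a sketch: the path app/api/webhooks/n8n/route.ts, the WEBHOOK_SECRET variable, and an x-signature header carrying a hex digest are hypothetical. The handler reads the body once with arrayBuffer() (rather than json(), or text(), which decodes and can alter bytes), verifies, and only then parses.

```typescript
import { createHmac, timingSafeEqual } from "node:crypto";

// Sketch of a webhook Route Handler that verifies before parsing.
// WEBHOOK_SECRET and the "x-signature" header name are hypothetical.

function hmacMatches(raw: Buffer, signatureHex: string, secret: string): boolean {
  const expected = createHmac("sha256", secret).update(raw).digest();
  const received = Buffer.from(signatureHex, "hex");
  return received.length === expected.length && timingSafeEqual(received, expected);
}

export async function POST(request: Request): Promise<Response> {
  const secret = process.env.WEBHOOK_SECRET;
  if (!secret) return new Response("Server misconfigured", { status: 500 });

  // 1. Buffer the raw body exactly as sent. Do not call request.json() first.
  const raw = Buffer.from(await request.arrayBuffer());

  // 2. Verify the signature against those bytes.
  const signature = request.headers.get("x-signature") ?? "";
  if (!hmacMatches(raw, signature, secret)) {
    return new Response("Invalid signature", { status: 401 });
  }

  // 3. Only now parse the JSON.
  let payload: unknown;
  try {
    payload = JSON.parse(raw.toString("utf8"));
  } catch {
    return new Response("Malformed JSON", { status: 400 });
  }

  // Hand `payload` to a queue or processing function here.
  return new Response(JSON.stringify({ received: true }), {
    status: 200,
    headers: { "Content-Type": "application/json" },
  });
}
```

In Express, the equivalent is mounting express.raw({ type: "application/json" }) on the webhook route instead of express.json(), so that req.body arrives as a Buffer you can hash before calling JSON.parse.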

Cloudflare Workers for Edge-Native Webhooks

For organizations requiring globally distributed webhook ingestion with sub-10ms cold start times and no server provisioning overhead, Cloudflare Workers provide a compelling alternative to traditional backend infrastructure. Workers execute in Cloudflare's global network at the edge location closest to the webhook sender, potentially reducing round-trip latency by hundreds of milliseconds when that sender is far from a single-region origin server.

A Cloudflare Worker receiving n8n webhooks can typically respond within a few tens of milliseconds, provided it does no slow upstream work before replying. The Worker validates the HMAC signature, extracts and transforms the payload if required, and writes to a Workers KV namespace or forwards the payload to an internal n8n endpoint using the runtime's native fetch API. The operational model is scale-to-zero by default: billing is driven primarily by actual requests rather than provisioned capacity, and isolate startup is typically a few milliseconds rather than the hundreds of milliseconds common with container-based serverless platforms.
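A minimal Worker sketch of that flow, using the Web Crypto API that the Workers runtime provides. The binding names (WEBHOOK_SECRET, WEBHOOK_EVENTS), the x-signature header with a hex digest, and the KV key scheme are assumptions; KVNamespaceLike is a local stand-in for the KVNamespace type from @cloudflare/workers-types so the block stands on its own.

```typescript
// Minimal Cloudflare Worker sketch (module syntax). All binding and header
// names are hypothetical; KVNamespaceLike mimics the KV put() method.

interface KVNamespaceLike {
  put(key: string, value: string): Promise<void>;
}

export interface Env {
  WEBHOOK_SECRET: string;
  WEBHOOK_EVENTS: KVNamespaceLike;
}

// Decode a hex string to bytes; returns null for empty or malformed input.
function hexToBytes(hex: string) {
  if (hex.length === 0 || hex.length % 2 !== 0 || !/^[0-9a-fA-F]+$/.test(hex)) return null;
  const out = new Uint8Array(hex.length / 2);
  for (let i = 0; i < out.length; i++) {
    out[i] = parseInt(hex.slice(i * 2, i * 2 + 2), 16);
  }
  return out;
}

async function verifyHmac(secret: string, body: ArrayBuffer, signatureHex: string): Promise<boolean> {
  const sig = hexToBytes(signatureHex);
  if (!sig) return false;
  const key = await crypto.subtle.importKey(
    "raw",
    new TextEncoder().encode(secret),
    { name: "HMAC", hash: "SHA-256" },
    false,
    ["verify"],
  );
  // subtle.verify recomputes the MAC and compares it for us.
  return crypto.subtle.verify("HMAC", key, sig, body);
}

const worker = {
  async fetch(request: Request, env: Env): Promise<Response> {
    if (request.method !== "POST") return new Response("Method Not Allowed", { status: 405 });

    const body = await request.arrayBuffer(); // raw bytes first
    const ok = await verifyHmac(env.WEBHOOK_SECRET, body, request.headers.get("x-signature") ?? "");
    if (!ok) return new Response("Invalid signature", { status: 401 });

    let payload: unknown;
    try {
      payload = JSON.parse(new TextDecoder().decode(body));
    } catch {
      return new Response("Malformed JSON", { status: 400 });
    }

    // Store for later processing; the key scheme is illustrative.
    await env.WEBHOOK_EVENTS.put(`event:${crypto.randomUUID()}`, JSON.stringify(payload));
    return new Response(null, { status: 202 });
  },
};

export default worker;
```

Because crypto.subtle.verify does the comparison, there is no hand-written equality check to get wrong. To forward to n8n instead of writing to KV, you could replace the put call with a fetch to your n8n webhook URL, optionally taking the handler's third argument (the ExecutionContext) and wrapping the forward in ctx.waitUntil so the Worker can return 202 before the forward completes.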


Want to implement this in your business?

Book a free discovery call with Pratik directly. We'll map out where AI-driven automation can generate the highest ROI in your existing processes.