In December 2025, NASA's Jet Propulsion Laboratory (JPL) achieved something remarkable: for the first time in human history, an autonomous artificial intelligence system planned the driving routes of a spacecraft on another planet. The AI in question was Anthropic's Claude, and the mission was guiding NASA's Perseverance rover across the surface of Mars.

This milestone matters not just to the space exploration community. For enterprise technologists, automation architects, and anyone building complex, multi-step AI workflows, this moment signals the transition from classical "if-this-then-that" automation into something far more powerful: agentic AI reasoning.

The Fundamental Problem: Communication Delays Break If-This-Then-That Automation

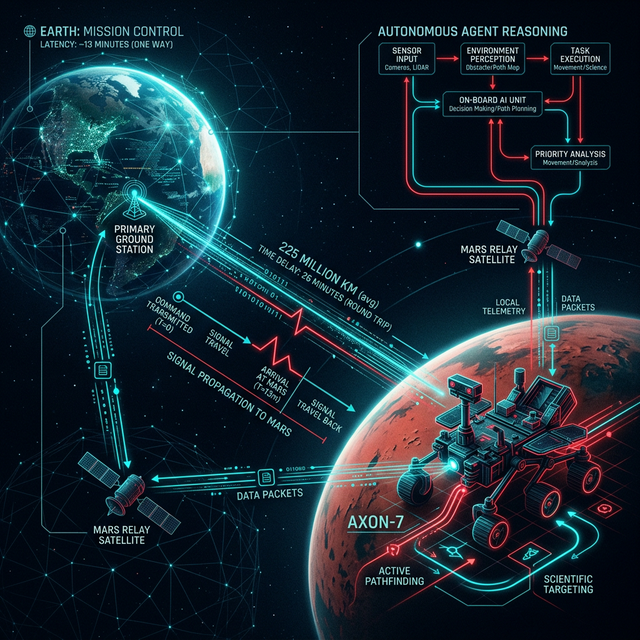

To understand why this breakthrough is so significant, you must first appreciate the brutal physics of Mars operations. The distance between Earth and Mars varies from about 54 million to 401 million kilometres depending on orbital alignment. Radio waves travel at the speed of light, so a signal takes roughly 3 to 22 minutes to cross that distance one way. A round-trip command-and-response cycle can therefore take up to about 45 minutes.
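The delay is simply distance divided by the speed of light. Here is a minimal sketch of that arithmetic using the two distances quoted above; the function and constant names are our own, chosen for illustration.

```python
# Speed of light in vacuum, in kilometres per second (exact by SI definition).
SPEED_OF_LIGHT_KM_S = 299_792.458

# Closest and farthest Earth-Mars separations quoted in the text, in kilometres.
NEAREST_KM = 54e6
FARTHEST_KM = 401e6


def one_way_delay_minutes(distance_km: float) -> float:
    """Return the one-way radio travel time in minutes for a given distance."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0


if __name__ == "__main__":
    for label, km in (("nearest", NEAREST_KM), ("farthest", FARTHEST_KM)):
        one_way = one_way_delay_minutes(km)
        print(f"{label}: one-way {one_way:.1f} min, round trip {2 * one_way:.1f} min")
```

The round trip is just twice the one-way figure, which is why any "stop and ask Earth" step is so costly.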

Under the classical automation paradigm, this is devastating. In traditional rule-based automation, if a rover's sensor detects an obstacle outside a predefined threshold, it halts and waits for human instruction. A request for help and the answer to it can take the better part of an hour to make the round trip. Meanwhile the rover sits idle some 140 million miles away, burning expensive mission time while its instruments accumulate radiation exposure.
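To make the failure mode concrete, here is a minimal sketch of a halt-and-wait rule. It is a toy model, not rover flight software: the `Reading` type, the threshold value, and the action strings are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    obstacle_height_cm: float  # hypothetical sensor value


# Hypothetical threshold: anything taller is outside what the rule covers.
MAX_KNOWN_OBSTACLE_CM = 20.0


def rule_based_step(reading: Reading) -> str:
    """Classical rule: proceed on known cases, otherwise stop and ask a human."""
    if reading.obstacle_height_cm <= MAX_KNOWN_OBSTACLE_CM:
        return "DRIVE"
    return "HALT_AND_WAIT"


def idle_minutes(action: str, round_trip_minutes: float) -> float:
    """Time spent parked while a question and its answer cross the link."""
    return round_trip_minutes if action == "HALT_AND_WAIT" else 0.0
```

Every case the rule does not anticipate costs a full round trip, and the rule has no way to reason about whether the obstacle actually matters.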

The engineers at JPL had been aware of this bottleneck for years. What they needed was not faster rules — they needed a system capable of reasoning about context and making autonomous decisions within defined boundaries. That is precisely what agentic LLMs offer.

What JPL Actually Built: Claude as a Headless Reasoning Agent

The engineering implementation at JPL, as reported by R&D World, Space.com, and confirmed via Anthropic's own communications, centered on deploying Claude not as a conversational interface but as a headless API agent integrated into their planning pipeline. This architecture is directly analogous to the multi-agent orchestration architectures we deploy at Onezipp for enterprise clients.

The Technical Pipeline

Step 1 — Data Ingestion: Claude Code was provided access to 28 years of Mars mission data, including high-resolution orbital imagery from NASA's HiRISE (High Resolution Imaging Science Experiment) camera aboard the Mars Reconnaissance Orbiter, plus detailed digital elevation models (DEMs) of the Jezero Crater terrain.

Step 2 — Semantic Terrain Analysis: Claude analysed the visual and topographic data to identify mission-critical terrain features: bedrock outcroppings, hazardous boulder fields, unstable sand ripple formations, and slope gradients that could cause the rover to tip.

Step 3 — Route Generation in Rover Markup Language: Rather than outputting a human-readable description, Claude generated commands directly in Rover Markup Language (RML) — the same XML-based scripting language human planners use to send driving instructions to Mars rovers.

Step 4 — Digital Twin Validation: Before a single command was transmitted across 140 million miles of space, JPL engineers validated Claude's planned routes using a digital twin of the Perseverance rover — checking over 500,000 telemetry variables in simulation.
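Steps 2 through 4 can be sketched in a few lines of Python. This is an illustration of the general shape of such a pipeline, not JPL's implementation. The slope limit, the XML element and attribute names (which are not the real RML schema), and the telemetry variable names are all assumptions made for the example.

```python
import math
import xml.etree.ElementTree as ET

MAX_SLOPE_DEG = 20.0  # hypothetical tip-risk limit, not a JPL figure


def slope_deg(dem, r, c, cell_m):
    """Slope at an interior DEM cell from central differences, in degrees."""
    dz_dx = (dem[r][c + 1] - dem[r][c - 1]) / (2 * cell_m)
    dz_dy = (dem[r + 1][c] - dem[r - 1][c]) / (2 * cell_m)
    return math.degrees(math.atan(math.hypot(dz_dx, dz_dy)))


def safe_waypoints(dem, candidates, cell_m):
    """Step 2: keep only candidate (row, col) cells at or below the slope limit."""
    return [(r, c) for r, c in candidates
            if slope_deg(dem, r, c, cell_m) <= MAX_SLOPE_DEG]


def to_plan_xml(waypoints, cell_m):
    """Step 3: serialise waypoints as XML. Tag names are invented, not real RML."""
    root = ET.Element("drive_plan")
    for seq, (r, c) in enumerate(waypoints):
        ET.SubElement(root, "waypoint", {
            "seq": str(seq),
            "x_m": f"{c * cell_m:.1f}",
            "y_m": f"{r * cell_m:.1f}",
        })
    return ET.tostring(root, encoding="unicode")


def validate(simulated, limits):
    """Step 4: list every simulated variable that is missing or out of range."""
    failures = []
    for name, (low, high) in limits.items():
        value = simulated.get(name)
        if value is None or not (low <= value <= high):
            failures.append(name)
    return failures


if __name__ == "__main__":
    # 4x4 elevation grid in metres: flat on the left, a steep rise on the right.
    dem = [[0.0, 0.0, 0.0, 3.0] for _ in range(4)]
    route = safe_waypoints(dem, [(1, 1), (1, 2), (2, 1)], cell_m=1.0)
    print(to_plan_xml(route, cell_m=1.0))
    # Hypothetical simulator output checked against hypothetical limits.
    print(validate({"tilt_deg": 12.0}, {"tilt_deg": (0.0, 30.0)}))
```

The point of the structure is that the generated plan is never trusted on its own: whatever produces the waypoints, the validation step is a separate, deterministic check that runs before anything is transmitted.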

The results were extraordinary. The Perseverance rover successfully completed two AI-planned drives in Jezero Crater. The first, executed on December 8, 2025, covered 210 metres (689 feet). The second, on December 10, 2025, covered 246 metres (807 feet). JPL engineers reported that only minor adjustments were required after review — primarily refining a narrow corridor where ground-level images revealed sand ripples not clearly visible in the orbital data that Claude had accessed.

"Route planning time was cut in half. The engineer time that was previously dedicated to plotting step-by-step paths is now focused on reviewing and approving AI-generated plans." — R&D World, reporting on JPL's implementation.

Why This Is Not Science Fiction: The Architectural Parallels to Enterprise Automation

The patterns JPL deployed are not exotic. They are, in fact, the same architectural patterns that modern enterprise automation agencies are deploying today for mundane but equally mission-critical business workflows.

Consider the similarity: a JPL engineer cannot "fix" the rover's route in real-time because of communication distance. An enterprise workflow connecting 15 different SaaS platforms via API cannot rely on a human to manually re-route every edge case at 3am. In both scenarios, the requirement is identical: the system must be intelligent enough to handle ambiguity, make contextual decisions, and route around failure — autonomously, within a defined policy boundary.
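One way to express that requirement in code is a fallback chain: fixed rules first, a reasoning step for anything the rules do not cover, and a human queue for anything outside policy. The sketch below is a simplified illustration under our own assumptions. The `agent` callable is a hypothetical stand-in for a model call, and the action names are invented.

```python
from typing import Callable, Optional


def handle_event(
    event: dict,
    rules: dict[str, Callable[[dict], str]],
    agent: Callable[[dict], Optional[str]],
    allowed: set[str],
) -> str:
    """Try a fixed rule first, then a reasoning step, then a human queue."""
    rule = rules.get(event.get("type", ""))
    if rule is not None:
        return rule(event)  # known case: deterministic handling
    proposal = agent(event)  # unknown case: ask the reasoning layer
    if proposal in allowed:
        return proposal  # proposal is inside the policy boundary
    return "ESCALATE_TO_HUMAN"  # anything else waits for a person
```

The agent never gets the last word: its proposal is accepted only if it appears in the `allowed` set, so an unexpected suggestion degrades to an escalation instead of an action.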

According to Gartner, by 2028 approximately 33% of enterprise software applications will incorporate agentic AI capabilities, up from less than 1% in 2024. The AI agents market was valued at $5.9 billion in 2024 and, per Grand View Research, is projected to exceed $50 billion by 2030, a reported compound annual growth rate of 47%.

The Concept of "Bounded Autonomy" and Why It Matters

One of the most important lessons from the JPL deployment is what engineers call bounded autonomy: the principle of granting an AI agent maximum operational freedom within a carefully prescribed boundary of acceptable action. Claude was never asked to modify the mission's scientific objectives. It was not authorized to override the rover's safety firmware. It was given a specific scope (plan a traverse from Point A to Point B) and stayed within that scope, with human reviewers making only minor adjustments to the result.

This is the same design philosophy Onezipp employs when deploying agentic workflows for enterprise clients. An AI agent handling accounts payable may be authorized to extract invoice data, reconcile against purchase orders, and route discrepancies to human reviewers. It is explicitly not authorized to approve payments above a certain threshold without human-in-the-loop confirmation. The boundary defines the safety of the system.
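The accounts payable example translates naturally into an explicit policy check that sits between the agent and the systems it acts on. This is a minimal sketch, assuming a hypothetical approval limit and invented action names; a production policy would be more detailed.

```python
from dataclasses import dataclass

# Hypothetical action names the agent is permitted to propose.
ALLOWED_ACTIONS = frozenset(
    {"extract_invoice", "reconcile_po", "route_discrepancy", "approve_payment"}
)

# Hypothetical threshold above which a human must confirm the payment.
APPROVAL_LIMIT = 5_000.00


@dataclass(frozen=True)
class ProposedAction:
    name: str
    amount: float = 0.0


def authorize(action: ProposedAction) -> str:
    """Decide whether a proposed action runs, is blocked, or needs a human."""
    if action.name not in ALLOWED_ACTIONS:
        return "DENY"
    if action.name == "approve_payment" and action.amount > APPROVAL_LIMIT:
        return "NEEDS_HUMAN_APPROVAL"
    return "ALLOW"
```

Because the check lives outside the agent, the boundary holds no matter what the agent proposes: `authorize(ProposedAction("approve_payment", 12_000.0))` returns `"NEEDS_HUMAN_APPROVAL"`, and an action that is not on the list is denied outright.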

From Deep Space to Enterprise: The Agentic Intelligence Transition

What the JPL experiment demonstrates most clearly is that the technology is ready. Agentic LLMs are not a future aspiration — they are a present-day operational reality, already trusted at the highest stakes level imaginable: the navigation of a $2.7 billion spacecraft on the surface of another planet.

For enterprise organizations still relying on brittle, template-driven automation workflows — where a changed email format or a new API response schema causes the entire pipeline to collapse — this moment should be a wake-up call. The cognitive layer that makes autonomous Mars navigation possible is the same cognitive layer that can make your contract management, customer onboarding, and financial reconciliation workflows intelligent, resilient, and genuinely hands-free.

Citations & Reference Sources

- R&D World: NASA's AI First on Mars — How Claude Planned Perseverance's 2025 Drives

- Space.com: NASA Used AI to Plan Mars Routes — A New Era for Autonomous Exploration

- Anthropic News: Claude Deployment Updates

- NASA: Artificial Intelligence Initiative

- Grand View Research: AI Agents Market Report — Projected $50B by 2030
