One of the most common architectural questions for engineering teams building production automation systems is where to draw the boundary between workflow orchestration and compute. n8n is an exceptional orchestration platform: it manages triggers, handles sequencing, provides error handling, integrates with hundreds of APIs through native nodes, and offers a visual debugging interface that dramatically reduces troubleshooting time. But it was not designed to be a general-purpose compute engine.

Some automation tasks are better executed in purpose-built serverless compute environments than as inline n8n JavaScript. Tasks that require burst-scale processing, heavy CPU computation, long execution times, specific runtime environments, or security-sensitive handling of data that should not appear in n8n's execution history are candidates for offloading to AWS Lambda or similar platforms. Knowing when to make this architectural decision, and how to implement it correctly, has a direct effect on how reliably a production automation system behaves at scale.

AWS Lambda Scaling: What Changed in Late 2023

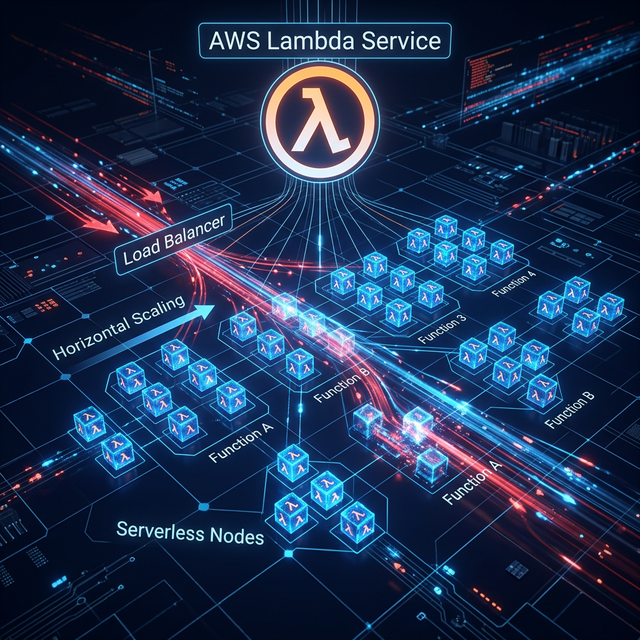

AWS significantly improved Lambda's scaling characteristics in late 2023. Each Lambda function now scales independently, adding up to 1,000 concurrent executions every 10 seconds. This is roughly a 12x improvement in scaling velocity over the previous limits. The practical implication for automation systems: a spike of 12,000 simultaneous workflow executions, the kind of load that might arrive from a bulk import event or an unexpected viral notification campaign, can now be absorbed after roughly two minutes of ramp-up rather than the far longer ramp that previously characterized Lambda at peak concurrency, provided the account-level concurrency quota is high enough to allow it.
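The scaling arithmetic can be checked with a minimal sketch. It assumes a simplified model in which capacity starts at zero and grows by a fixed step each interval. The function names are illustrative, and the pre-2023 figure of 500 additional executions per minute is an assumption not stated above. Real ramp-up also depends on account-level concurrency quotas.

```python
import math


def seconds_to_reach(target: int, step: int = 1000, interval_s: int = 10) -> int:
    """Seconds to ramp from zero to `target` concurrent executions.

    Simplified model: capacity grows by `step` every `interval_s` seconds;
    any initial burst allowance and account-level quotas are ignored.
    """
    if target <= 0:
        return 0
    return math.ceil(target / step) * interval_s


def per_minute_rate(step: int, interval_s: int) -> float:
    """Concurrent executions added per minute for a given step and interval."""
    return step * 60 / interval_s


new_rate = per_minute_rate(1000, 10)  # 1,000 every 10 seconds, as described above
old_rate = per_minute_rate(500, 60)   # ASSUMPTION: pre-2023 ramp of 500 per minute

print(seconds_to_reach(12_000))  # 120
print(new_rate / old_rate)       # 12.0
```

Under this model, a 12,000-execution spike needs about two minutes of ramp-up, and the per-minute rate ratio matches the roughly 12x figure.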

For n8n architectures, this means that offloading burst computational tasks to Lambda is much less likely to require the cautious pre-warming strategies and reserved concurrency pools that were common workarounds before the 2023 improvements. The default scaling behavior is now adequate for most enterprise automation workloads.

When to Keep Logic in n8n and When to Offload to Lambda

This is the central architectural decision, and it is worth mapping explicitly before designing a system.

n8n vs. Lambda: Decision Framework

Keep in n8n:

- API calls to third-party services (HTTP Request nodes)
- Data routing and conditional branching
- Simple data transformation (JSON manipulation, field mapping)
- Error handling, retry logic, and dead-letter queue processing
- Workflow state management and execution logging

Offload to Lambda:

- PDF generation or transformation
- Image resizing, compression, or format conversion
- ML model inference (if not using a managed API endpoint)
- Large dataset processing that would exceed n8n's memory allocation
- Operations requiring specific runtime environments, native library dependencies, or isolated execution contexts
- Long-running tasks exceeding n8n's Code (formerly Function) node execution timeout
- Security-sensitive operations where the input/output data should not appear in n8n's execution history
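The framework above can be encoded as a small checklist function, which is handy when reviewing many workflows at once. This is a sketch only: the `Task` fields, the `offload_reasons` helper, and both thresholds are hypothetical and are not n8n or Lambda defaults.

```python
from dataclasses import dataclass

# ASSUMPTION: illustrative limits, not n8n defaults; tune them to your deployment.
MAX_INLINE_SECONDS = 30.0
MAX_INLINE_MEMORY_MB = 256


@dataclass
class Task:
    """Hypothetical description of one unit of automation work."""
    cpu_heavy: bool = False             # PDF generation, image conversion, ML inference
    needs_native_runtime: bool = False  # specific runtime or native library dependencies
    sensitive_payload: bool = False     # data that should stay out of execution history
    expected_seconds: float = 1.0
    expected_memory_mb: int = 64


def offload_reasons(task: Task) -> list[str]:
    """Return why a task belongs in Lambda; an empty list means keep it in n8n."""
    reasons = []
    if task.cpu_heavy:
        reasons.append("cpu-heavy")
    if task.needs_native_runtime:
        reasons.append("native-runtime")
    if task.sensitive_payload:
        reasons.append("sensitive-payload")
    if task.expected_seconds > MAX_INLINE_SECONDS:
        reasons.append("long-running")
    if task.expected_memory_mb > MAX_INLINE_MEMORY_MB:
        reasons.append("memory-heavy")
    return reasons


pdf_job = Task(cpu_heavy=True, expected_seconds=45.0)
print(offload_reasons(pdf_job))  # ['cpu-heavy', 'long-running']
print(offload_reasons(Task()))   # []
```

Returning the reasons rather than a bare boolean keeps the decision auditable when the framework is applied to a backlog of workflows.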

The Async Invocation Pattern with SQS

The most robust pattern for integrating Lambda into n8n workflows at high throughput is an asynchronous queue-based architecture using Amazon SQS. Rather than invoking Lambda directly and waiting synchronously for a response (which would block the n8n execution for the duration of the Lambda function's runtime), n8n writes the task payload to an SQS queue and immediately continues to the next workflow node.

Lambda is configured with an SQS event source mapping and processes batches of messages from the queue. When processing is complete, Lambda writes the result to a second SQS queue or a DynamoDB record. A separate n8n workflow, triggered on a polling schedule or by an SQS-to-n8n webhook bridge, picks up the completed results and continues the downstream processing.

This pattern provides several critical reliability properties: SQS provides at-least-once message delivery with configurable dead-letter queues for failed messages; Lambda processing failures do not cause the triggering n8n workflow to fail; and the system naturally absorbs traffic spikes through the SQS buffer, preventing Lambda concurrency limits from becoming a bottleneck.
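A minimal sketch of the Lambda side of this pattern follows. It assumes the event source mapping has partial batch responses enabled (`ReportBatchItemFailures`), so one bad message does not force the whole batch to be retried. `process_task` and `store_result` are hypothetical placeholders for the real work and for the write to the results queue or DynamoDB record. The `task_id` field is likewise an assumed payload convention.

```python
import json
from typing import Any, Callable


def process_task(payload: dict) -> dict:
    """Hypothetical stand-in for the real work (PDF rendering, image resizing, etc.)."""
    if "task_id" not in payload:
        raise ValueError("payload is missing task_id")
    return {"task_id": payload["task_id"], "status": "done"}


def store_result(result: dict) -> None:
    """Hypothetical sink; in practice, write to the results queue or a DynamoDB record."""
    print(json.dumps(result))


def handle_batch(
    event: dict,
    process: Callable[[dict], dict] = process_task,
    store: Callable[[dict], None] = store_result,
) -> dict:
    """Process each SQS record independently and report only the failed ones."""
    failures = []
    for record in event.get("Records", []):
        try:
            store(process(json.loads(record["body"])))
        except Exception:
            # Only this message is reported as failed. SQS redelivers it and,
            # once the queue's maxReceiveCount is exceeded, moves it to the DLQ.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}


def handler(event: dict, context: Any) -> dict:
    """Lambda entry point for the SQS event source mapping."""
    return handle_batch(event)
```

Because SQS delivery is at-least-once, the same message can arrive twice. Keying stored results by `task_id` (or another idempotency key) lets a repeated delivery overwrite the earlier result instead of duplicating it.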

Cold Start Mitigation Strategies

Lambda cold starts, the initialization delay when a new execution environment is spun up, remain a relevant concern for latency-sensitive automation pipelines. The 2023 improvements changed how quickly Lambda adds execution environments, not how long each one takes to initialize. A cold start for a Node.js function with moderate dependencies still typically takes 200-600ms; for a JVM-based function, it can exceed 2 seconds.

For n8n-integrated Lambda functions that are part of user-facing automation flows (where latency is observable), the mitigation options are:

- Provisioned Concurrency: Pre-warms a specified number of Lambda execution environments, essentially eliminating cold starts up to that concurrency level. Costs approximately 15% more than standard Lambda pricing for the provisioned capacity, and that capacity is billed for as long as it is configured, whether or not it is serving invocations. Appropriate for high-value, user-facing workflows where sub-100ms Lambda response latency is a requirement.

- Lambda SnapStart: For Java-based Lambda functions, SnapStart caches the post-initialization execution snapshot, reducing cold starts from 2+ seconds to ~150ms. Relevant for teams with existing Java Lambda functions they are integrating into n8n workflows.

- Function Design Optimization: Keep Lambda packages lean (no unnecessary dependencies), initialize expensive clients outside the handler function so they persist across warm invocations, and prefer a lightweight runtime such as Node.js or Python, both of which typically have much lower cold start times than JVM-based runtimes for equivalent package sizes.
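The "initialize outside the handler" advice can be illustrated with a short sketch. `ExpensiveClient` is a hypothetical stand-in for an SDK client or database connection, and the 50 ms sleep only simulates setup cost.

```python
import time


class ExpensiveClient:
    """Hypothetical stand-in for an SDK client or database connection."""

    instances_created = 0

    def __init__(self) -> None:
        ExpensiveClient.instances_created += 1
        time.sleep(0.05)  # simulate slow setup (TLS handshake, config loading)

    def lookup(self, key: str) -> str:
        return f"value-for-{key}"


# Module scope: runs once per execution environment, during the cold start.
# Every warm invocation that follows reuses the same object.
client = ExpensiveClient()


def handler(event: dict, context: object) -> dict:
    # Only per-request work happens here; no client construction.
    return {"result": client.lookup(event["key"])}
```

Calling `handler` repeatedly in the same process leaves `ExpensiveClient.instances_created` at 1, which is the behavior a warm Lambda environment relies on.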

n8n Queue Mode: The Internal Scaling Layer

Independent of Lambda integration, n8n's own Queue Mode architecture is the essential configuration for any n8n deployment processing more than a few hundred workflow executions per day. Without Queue Mode, the n8n main process handles both webhook ingestion and workflow execution synchronously — creating a processing bottleneck where a slow workflow execution blocks the ingestion of new incoming webhooks.

In Queue Mode, the n8n main instance handles only webhook ingestion and job creation, writing execution jobs to a Redis queue immediately upon receipt. Separate n8n worker processes poll the queue and execute workflows independently. Multiple worker instances can run simultaneously, scaling horizontally as execution volume increases. The n8n official documentation recommends this architecture for any production deployment, and Coolify makes it straightforward to deploy n8n in Queue Mode with a Redis instance and configurable worker count via environment variables.
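As a rough sketch of the configuration involved, the helper below builds the environment shared by the main instance and its workers and renders it as a `.env`-style file. The variable names are the ones n8n's queue-mode documentation uses as far as I am aware, so verify them against your n8n version. The helper functions, the `redis` hostname, and the placeholder key are assumptions for illustration.

```python
def queue_mode_env(redis_host: str, redis_port: int, encryption_key: str) -> dict[str, str]:
    """Environment shared by the n8n main instance and every worker."""
    return {
        "EXECUTIONS_MODE": "queue",               # switch from regular to queue mode
        "QUEUE_BULL_REDIS_HOST": redis_host,      # Redis instance backing the job queue
        "QUEUE_BULL_REDIS_PORT": str(redis_port),
        "N8N_ENCRYPTION_KEY": encryption_key,     # must match on main and workers
    }


def render_env_file(env: dict[str, str]) -> str:
    """Render the mapping as KEY=value lines, one per variable."""
    return "\n".join(f"{key}={value}" for key, value in env.items()) + "\n"


# Hypothetical values; replace with your own Redis host and a real secret.
env = queue_mode_env("redis", 6379, "replace-with-a-real-secret")
print(render_env_file(env), end="")
```

Workers load the same environment and are started with the `n8n worker` command, so horizontal scaling amounts to running more worker processes against the same Redis queue.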

Citations & Reference Sources

- AWS Lambda Documentation: Concurrency Scaling and Limits

- n8n Official Documentation: Scaling n8n in Queue Mode

- Cloudflare Workers: Platform Limits and Edge Compute Architecture

- AWS SQS: Simple Queue Service — Architecture Patterns for Async Processing

- Coolify: Self-Hosted PaaS Deployment Guide for n8n in Queue Mode
